There was no template for this. We had to invent the mental model before we could design the features.

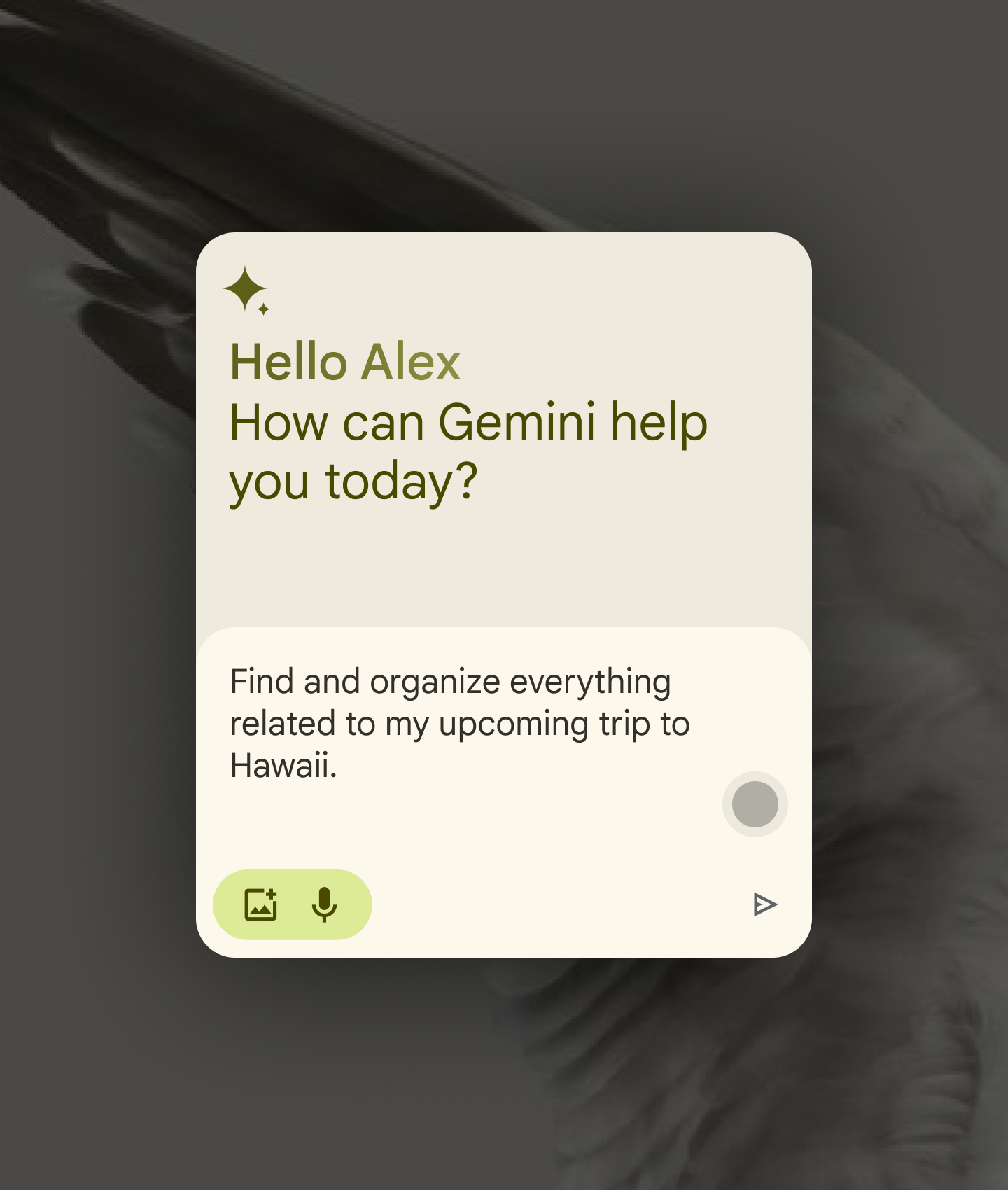

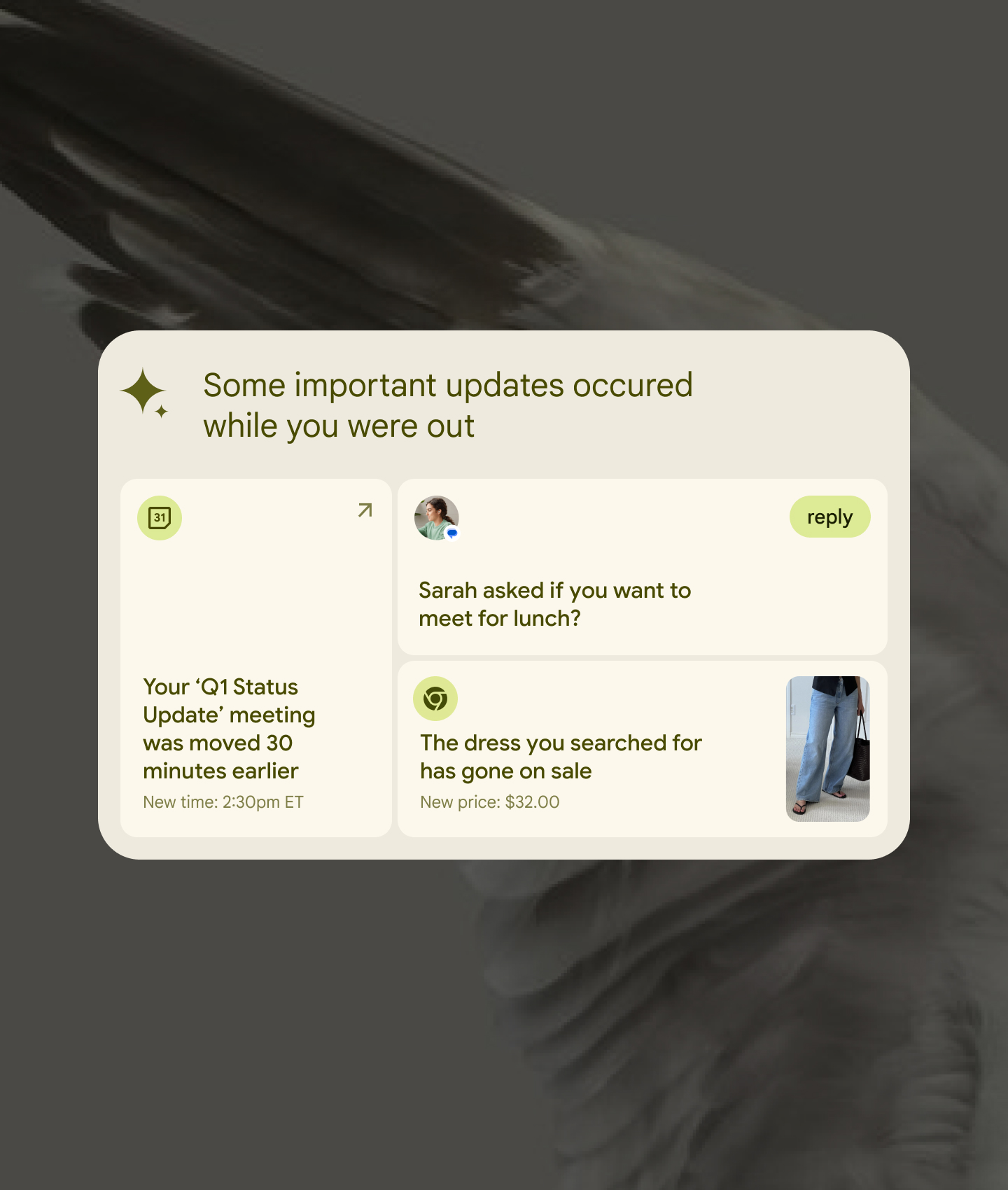

The brief was a provocation: what does it look like when Gemini stops being a feature you open and starts being the intelligence layer of the entire OS? Not a chatbot in a window, but an ambient presence that surfaces at the right moment without being asked. There were no established patterns. Every interaction decision meant building the design logic from scratch and weighing each idea against how people actually behave, not how we hoped they would.

The central constraint was restraint. An AI that's everywhere risks feeling like surveillance. The work had to find the line between genuinely useful anticipation and unwanted intrusion — and hold it across every concept. Getting that line right felt less like a design problem and more like an ethics problem.