A multi-year innovation sprint exploring how AI, interactivity, and editorial storytelling could transform Google Search advertising from interruption into experience.

Google Search ads have always prioritized restraint and trust. The challenge wasn't making them more expressive; it was doing that without eroding the thing that makes Search trustworthy in the first place. Can ads be immersive without feeling manipulative? That is a question I still ask myself.

Over two years I led focused sprints across progressive disclosure, GenAI personalization, and new commercial surfaces. Every concept had to pass the same test: does this serve the user, or just the advertiser? The two aren't always in conflict — but the work only succeeds when you hold that tension honestly.

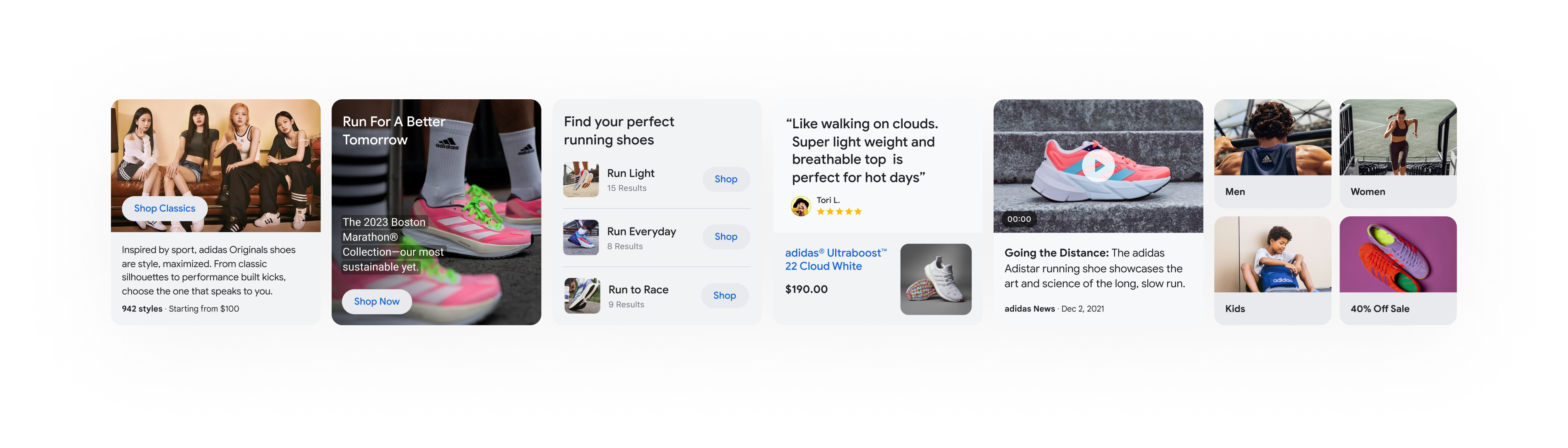

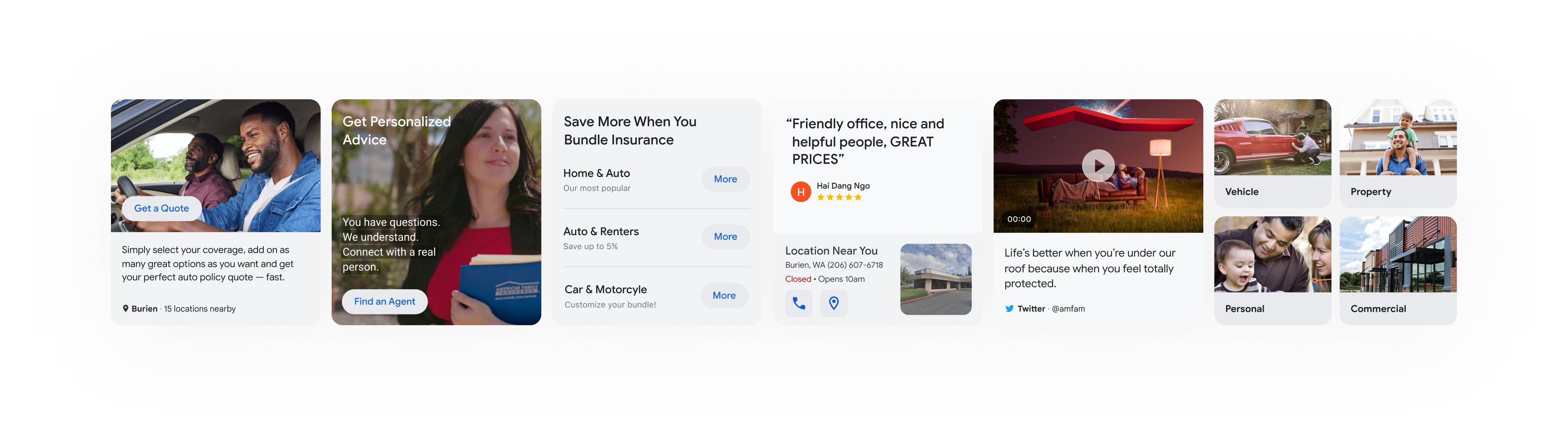

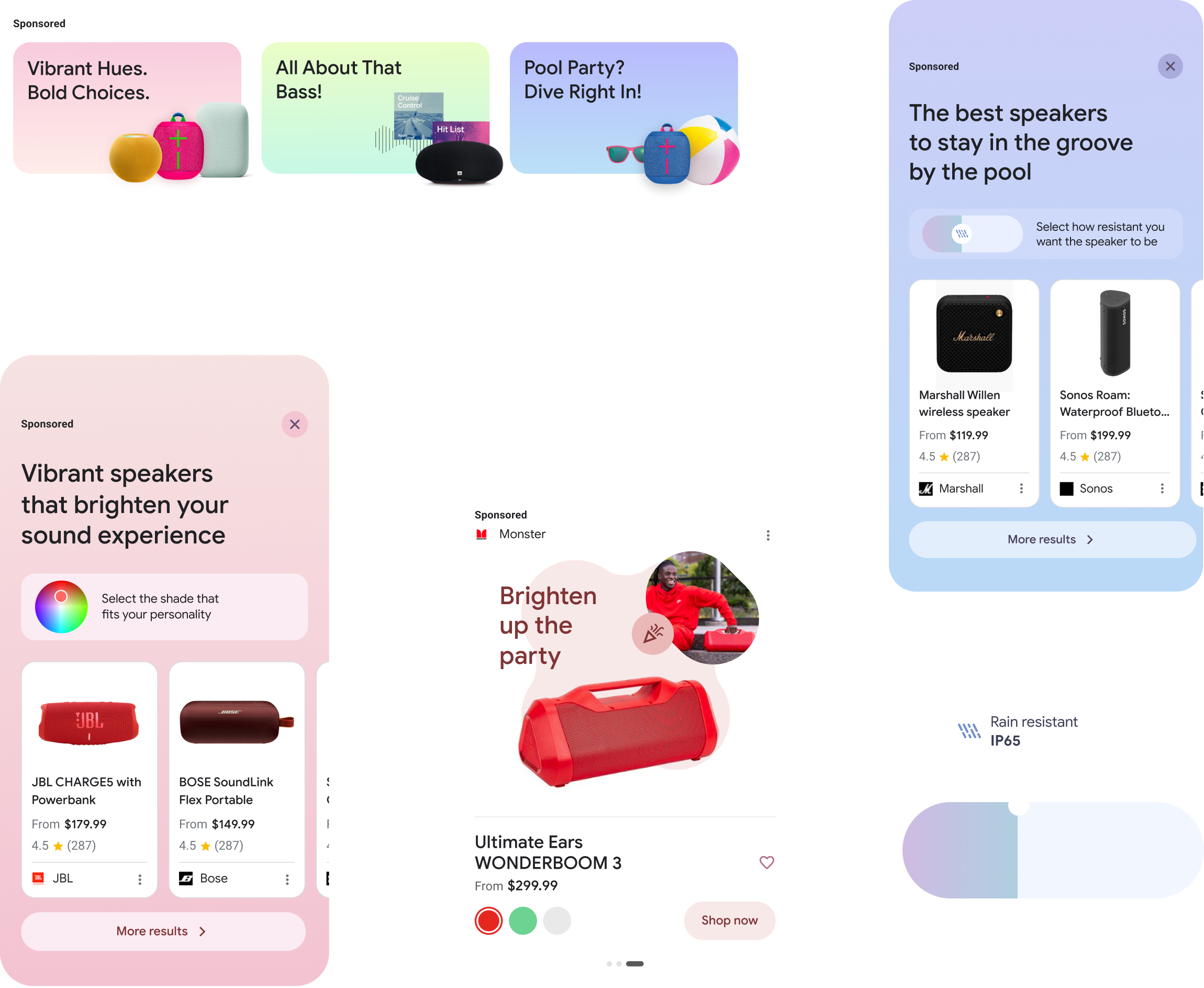

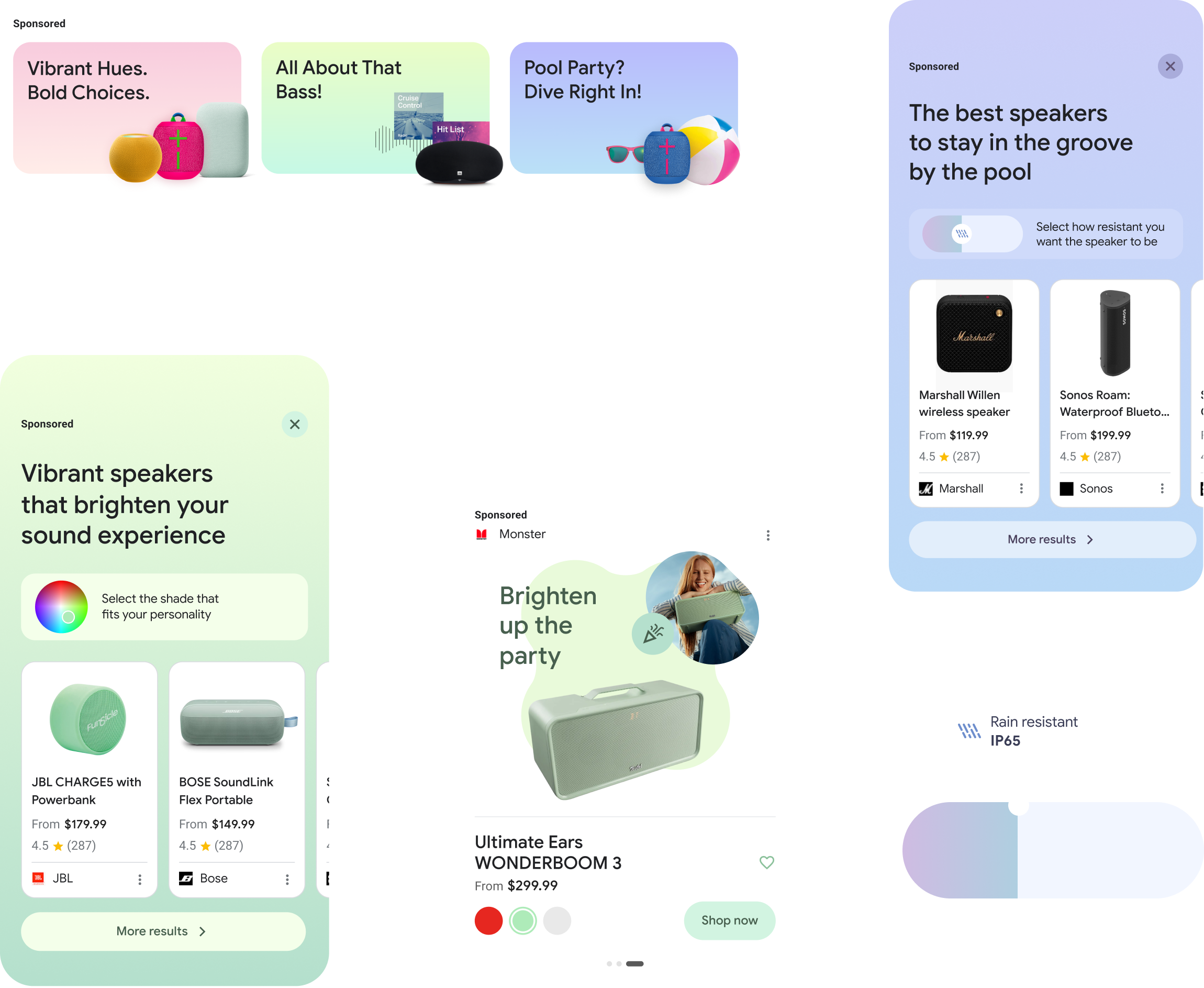

The answer was user intent. Once a person chooses to interact with an ad — a swipe, a tap — the content is allowed to expand and enrich. Swipe to Grow treats that moment of interaction as an invitation, unlocking a horizontally progressive narrative that moves from brand headline to product features to a targeted CTA.

We explored this across multiple verticals — Nike running shoes, Experian car insurance — demonstrating how the format scales across different advertiser types and commercial intents.

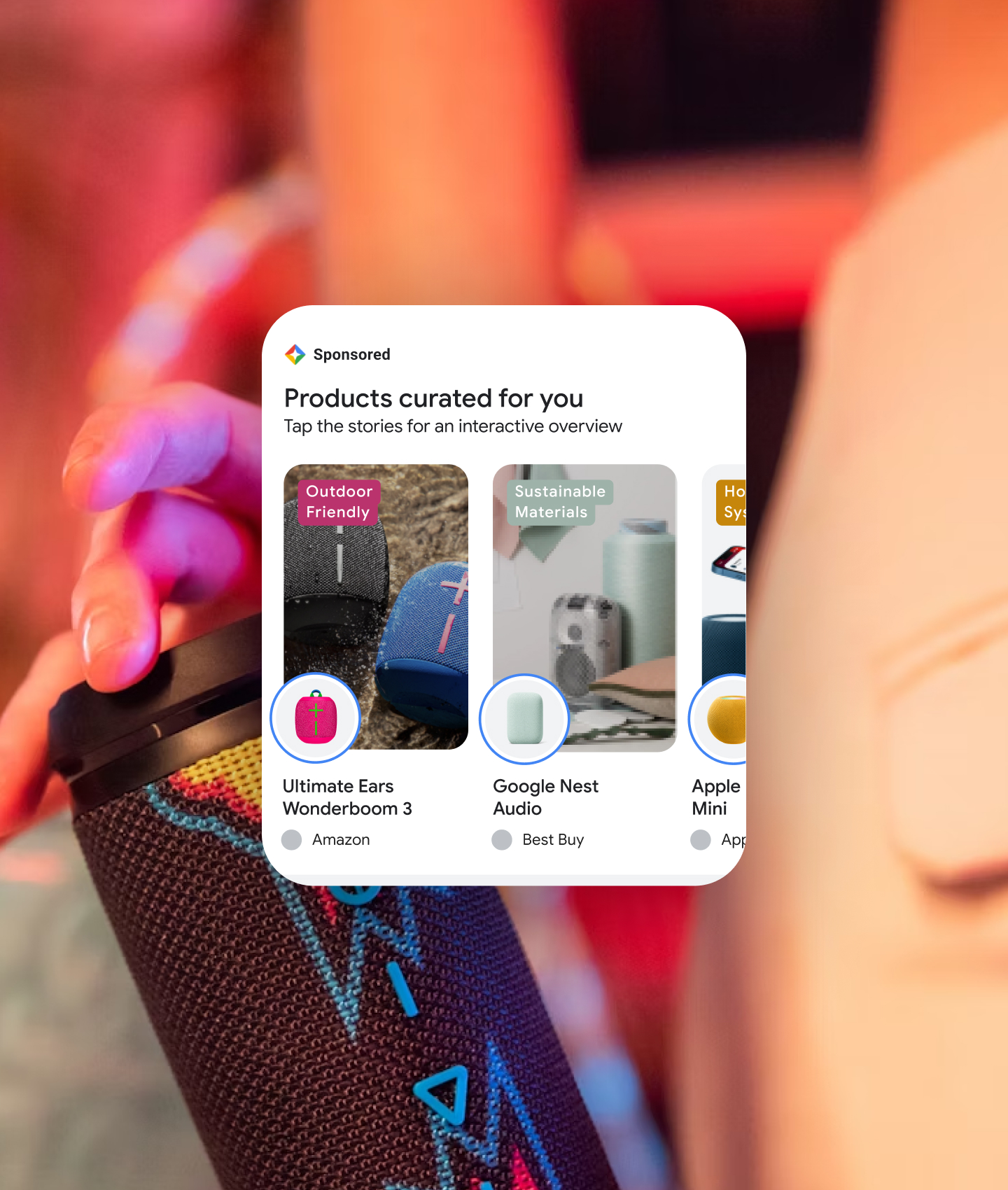

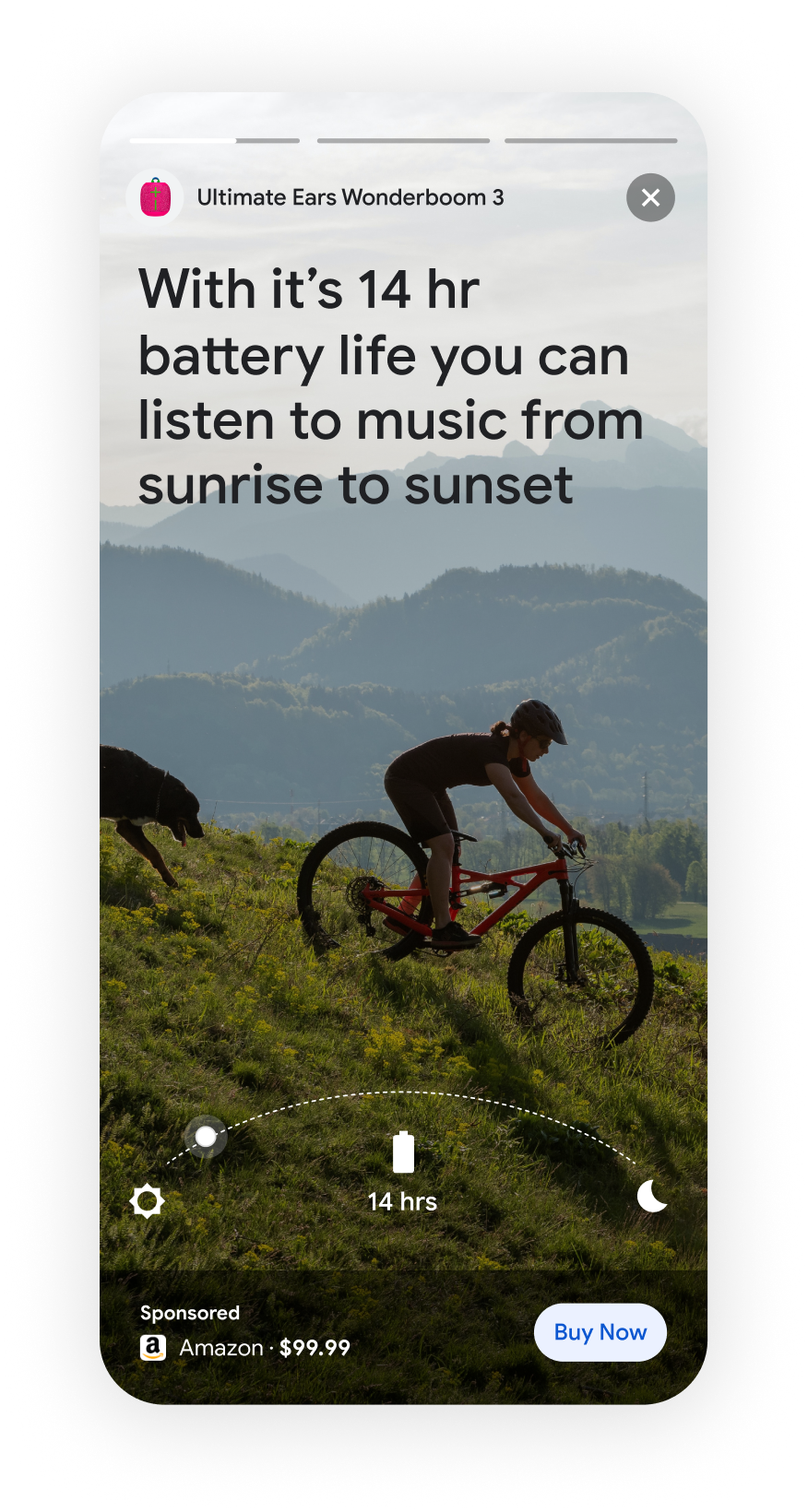

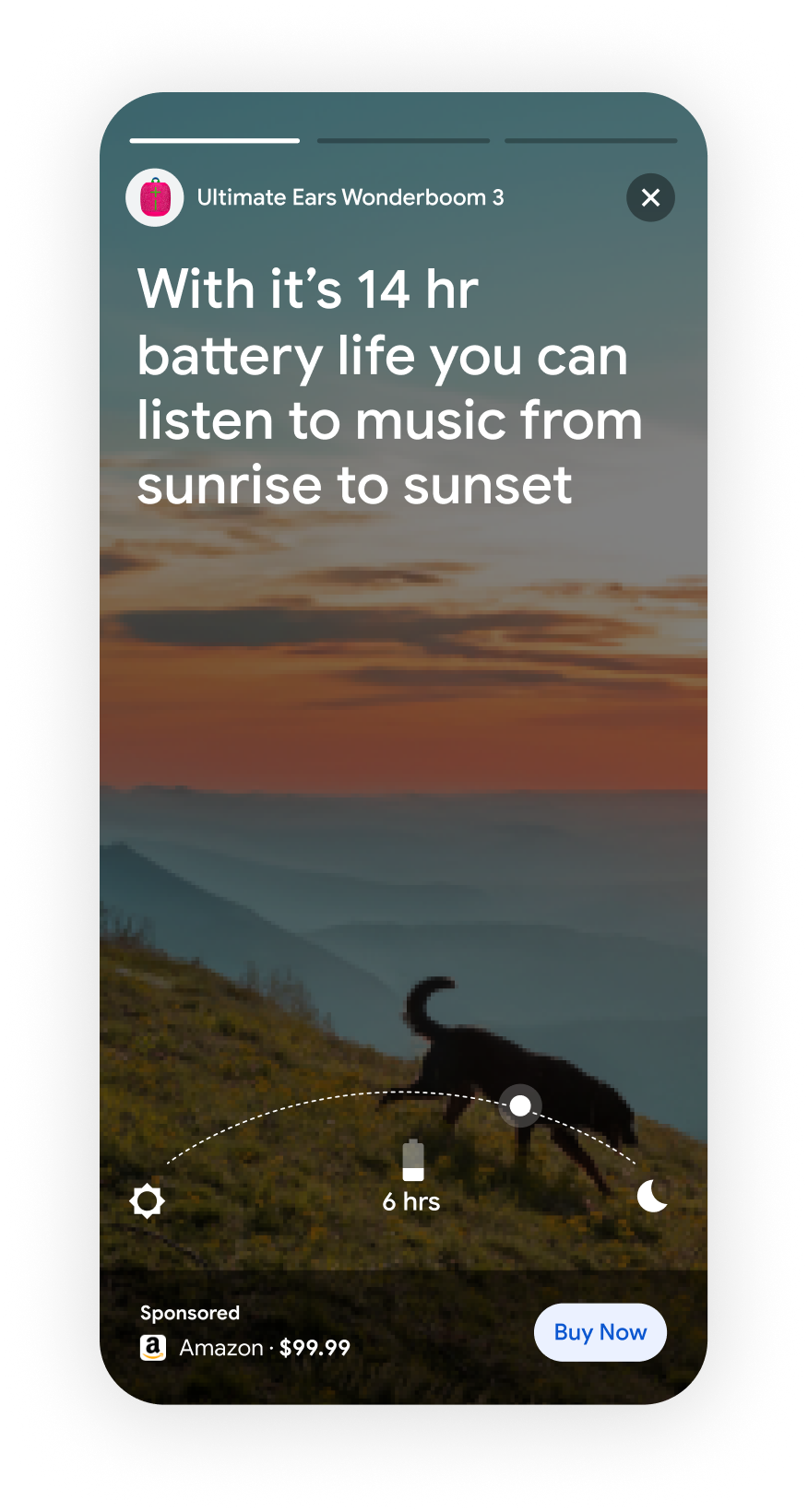

People already consume stories — on Instagram, on Snapchat, on TikTok. This concept asked why that format had never entered Search. Interactive Storytelling takes the moment a user taps a product listing and transforms it into a full-screen brand narrative: cinematic imagery, progressive product details, and a contextual CTA — all generated by AI from the advertiser's existing catalog.

The format respects the constraint: the story only launches on user intent. Nothing intrudes. Everything enriches.

One of the most forward-looking concepts in the sprint: what if a user could configure a product entirely within the ad unit, before ever leaving Google Search?

The Fitbit Sense 2 concept allowed users to swap watch bands, backgrounds, and faces — seeing their customized product in real time, with a contextual buy CTA that reflected their selections. This was designed as a proof of concept for how GenAI could enable truly personalized ad creative at scale.

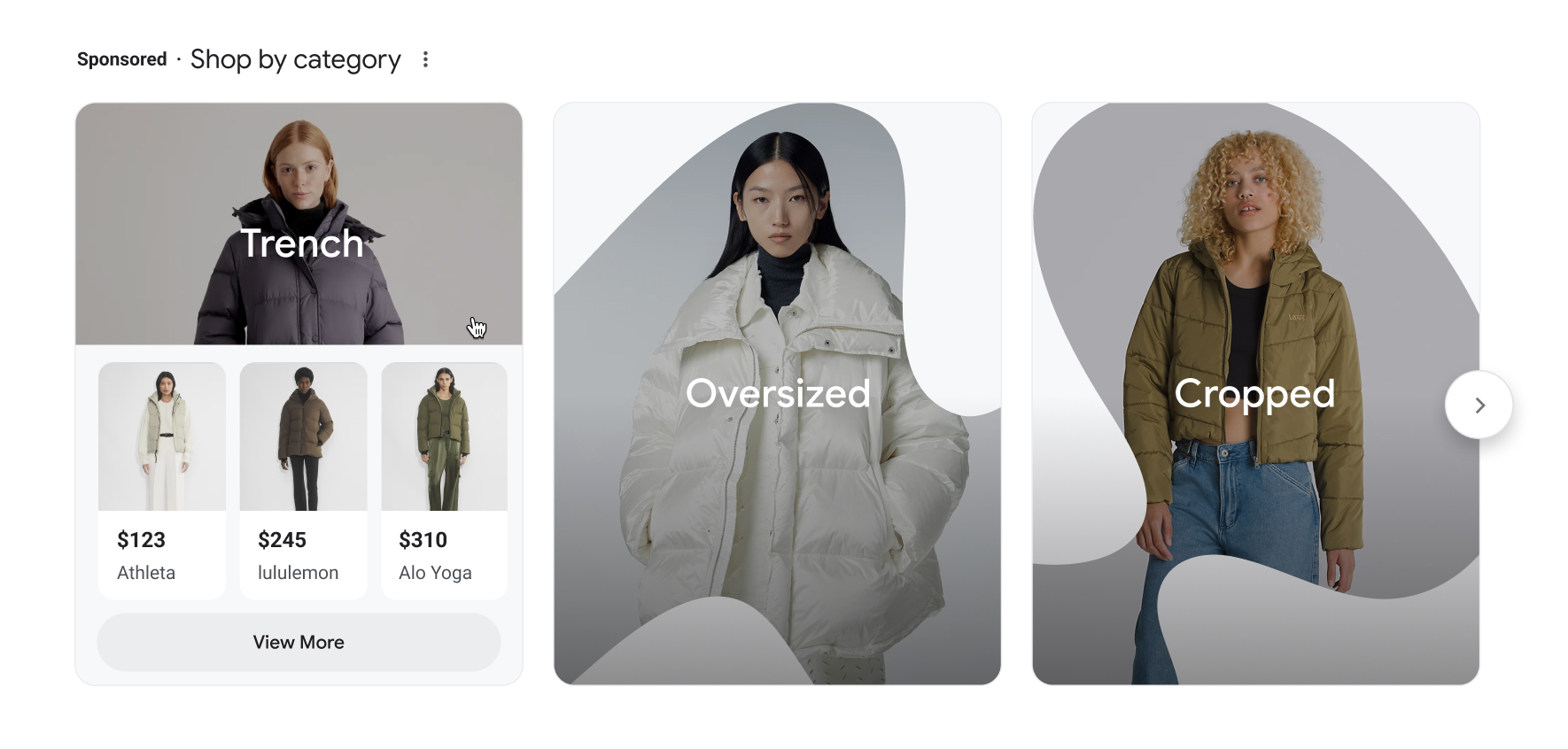

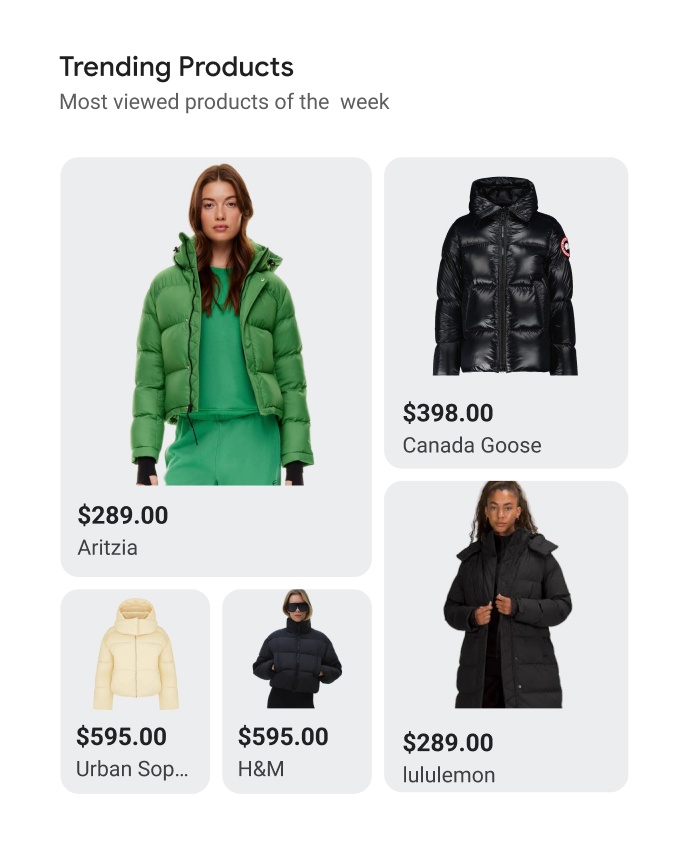

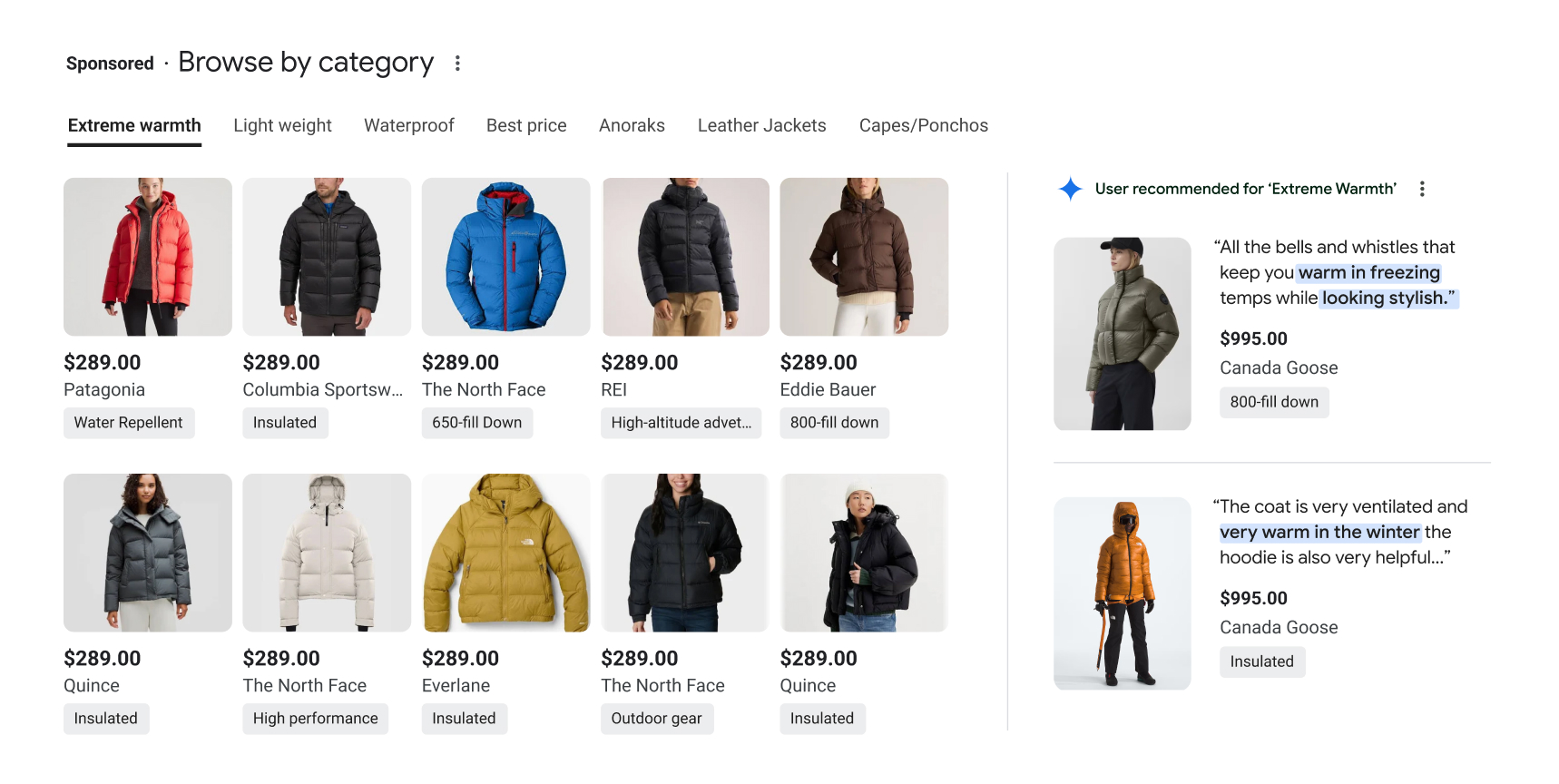

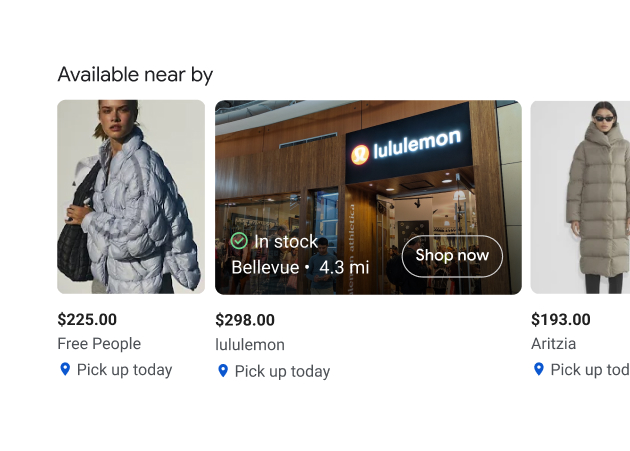

The brief was deliberately narrow: don't touch the PLAs. Instead, design a companion unit that could introduce richer, more personalized content — larger imagery, AI-generated category pairings, related products — in a way that felt native rather than intrusive. The constraint made it interesting.

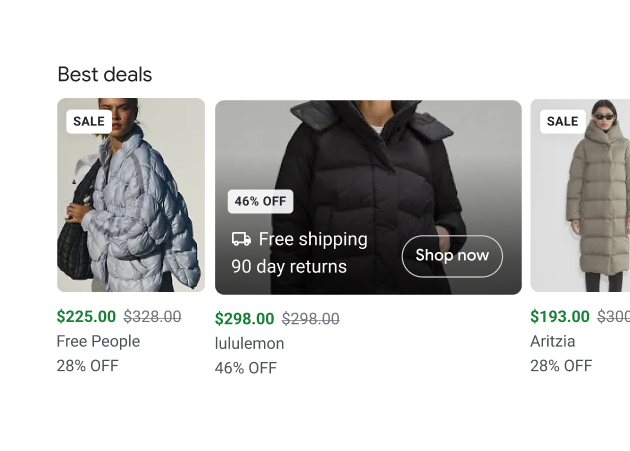

Smart Guides introduced a step-by-step discovery flow for high-consideration searches like travel — progressively refining results through contextual questions rather than requiring users to re-query.

Smart Filters reimagined product attribute filtering as a visually driven, AI-assisted interface, letting users filter by color, mood, or use case in a way that felt like browsing a curated storefront, not operating a faceted search.

Smart Guides

Working inside Google's constraints is a design exercise unlike almost any other. The most valuable skill I developed was holding both perspectives simultaneously — designing for the advertiser's ambition while genuinely protecting user trust. The best formats felt less like ads and more like features.

At this scale, design influence is as important as design execution. Many of these concepts shaped internal Google product conversations — the output wasn't always a shipped feature. Sometimes it was a shifted conversation. Knowing the difference between those two outcomes, and valuing both, is something I carried forward.